Modernizing Java EE apps to cloud-native with MicroProfile, Jakarta EE, and Open Liberty

This post covers the technical possibilities that Open Liberty provides and why I’m confident that you can move smoothly from a Java EE application to a cloud-native one. I won’t be talking about splitting monoliths into microservices, domain-driven design, or event storming. I’m a senior consultant for cloud-native topics and have worked on Java EE applications with servers like IBM’s WebSphere Application Server (WAS) or Red Hat’s JBoss EAP. I advise companies on moving to cloud technologies and picking the right tools for their environment.

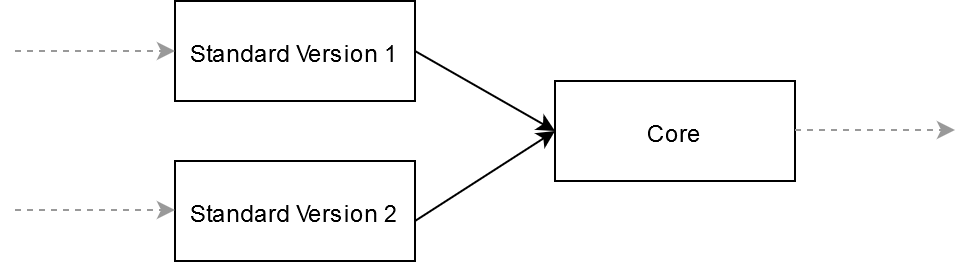

I recently had the task of modernizing an application. It was a Java EE 6 app running on WAS v8. The app itself is a kind of dispatcher: it has a SOAP endpoint that receives standardized XML messages, which it forwards to the target service. One day, a second version of the XML standard was added. At that point it was easier to split the app into multiple services. At the front were two services (one for each version of the standard), which parsed the message. The core service performed some transformations and distributed the message to the business services.

As a first step, it is always a good idea to get rid of cumbersome traditional application servers. They are stable, high-performance environments, but they take a long time to start and are hard to configure. If you are using traditional WAS, you should move to Open Liberty (or WebSphere Liberty, which is built on Open Liberty). With some limitations (e.g. remote EJBs), it should be possible to run your app on Liberty without major adjustments. In fact, some of our clients are still using WAS. As developers, we use Liberty to build our apps locally, while in other environments we use the client’s setup.

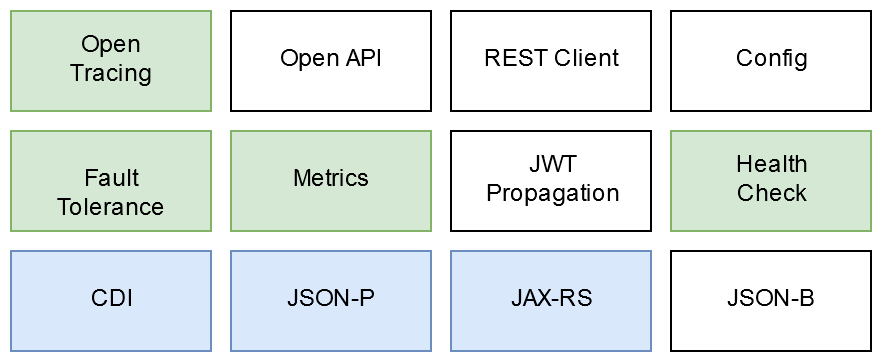

Liberty enables you to add features from Java EE or Jakarta EE alongside features from MicroProfile. The image shows the MicroProfile features:

- Blue blocks are well-known features from Java EE.
- Green blocks are the ones we used in our migrated microservices. I will discuss in this post why they are useful.
- White blocks are other MicroProfile features that are worth looking into but weren’t used in this app.

Resiliency in microservices with MicroProfile and Open Liberty

When you create microservices, they communicate with each other. Sometimes you have to use synchronous RESTful calls (as we did from the standard services to the core service). In this situation you should build resilience into your microservices. If you don’t, one broken application can break every app in the calling chain, especially when response times are long. With MicroProfile you can add resilience to your infrastructure, for example by defining timeouts in your REST client and applying the circuit breaker pattern, just by adding annotations to your app code.

If you disagree and say that resilience is the responsibility of a service mesh, you’re right, but you might not yet have a service mesh installed in your infrastructure. When you do install one, I’ve got good news for you: Open Liberty and MicroProfile integrate well with Istio and recognize the configurations from the mesh. If there are configurations in both the app and the service mesh, the service mesh overrules the app. But the service mesh cannot provide fallbacks. This is because you need business logic to react to errors with solutions (fallbacks) such as caching, returning default values, throwing errors with descriptive error messages, and so on.
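
To make the fallback point concrete, here is a minimal plain-Java sketch of a circuit breaker with a fallback. It deliberately uses only the JDK and is not how MicroProfile implements it; MicroProfile Fault Tolerance gives you comparable behavior through annotations such as `@Timeout`, `@CircuitBreaker`, and `@Fallback`. The class name, the threshold, and the open delay are hypothetical, and the sketch is not thread-safe.

```java
import java.util.function.LongSupplier;
import java.util.function.Supplier;

/** Illustrative circuit breaker with fallback; hypothetical names, not thread-safe. */
final class CircuitBreakerSketch<T> {
    private final int failureThreshold; // consecutive failures before the circuit opens
    private final long openMillis;      // how long the circuit stays open
    private final Supplier<T> fallback; // business logic, e.g. a cached or default value
    private final LongSupplier clock;   // injected so the sketch can be tested without sleeping
    private int consecutiveFailures;
    private long openedAt;

    CircuitBreakerSketch(int failureThreshold, long openMillis,
                         Supplier<T> fallback, LongSupplier clock) {
        this.failureThreshold = failureThreshold;
        this.openMillis = openMillis;
        this.fallback = fallback;
        this.clock = clock;
    }

    /** True while calls are short-circuited to the fallback. */
    boolean isOpen() {
        return consecutiveFailures >= failureThreshold
                && clock.getAsLong() - openedAt < openMillis;
    }

    /** Runs the remote call unless the circuit is open; falls back on failure. */
    T call(Supplier<T> remoteCall) {
        if (isOpen()) {
            return fallback.get(); // fail fast, do not touch the broken service
        }
        try {
            T result = remoteCall.get();
            consecutiveFailures = 0; // a success closes the circuit again
            return result;
        } catch (RuntimeException e) {
            consecutiveFailures++;
            if (consecutiveFailures >= failureThreshold) {
                openedAt = clock.getAsLong(); // open (or re-open) the circuit
            }
            return fallback.get();
        }
    }
}
```

The fallback is the part a service mesh cannot supply: only the application knows whether a cached value, a default, or a descriptive error is the right answer for a given call.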

Building 12-factor apps with MicroProfile and Open Liberty

If you want to develop a cloud-native application, it is a good idea to look into the 12-factor app methodology, which provides guidelines on how to build a "Software as a Service" application. Emily provides a good overview. It is important that you prepare your application for cloud platforms and their requirements. I consider "lift-and-shift" to be an anti-pattern. Lift-and-shift just means that you run your application on a different virtual machine, but it’s hard - if not impossible - to benefit from the advantages of elastic scaling, self-healing, or zero-downtime deployments. A better approach is the strangler pattern, in which you create a new system around the old system and gradually let the new system grow over a few years until the old system is "strangled".
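
One way to picture the strangler pattern is as a routing facade in front of both systems. The following is a minimal sketch under assumptions: the class name, the path prefixes, and the idea of routing by path are all hypothetical and not taken from the project described here.

```java
import java.util.Set;
import java.util.function.Function;

/** Illustrative strangler facade: routes already-migrated paths to the new system. */
final class StranglerFacadeSketch {
    private final Set<String> migratedPrefixes;           // grows as more functionality moves over
    private final Function<String, String> legacySystem;  // stand-in for the old application
    private final Function<String, String> newSystem;     // stand-in for the new services

    StranglerFacadeSketch(Set<String> migratedPrefixes,
                          Function<String, String> legacySystem,
                          Function<String, String> newSystem) {
        this.migratedPrefixes = migratedPrefixes;
        this.legacySystem = legacySystem;
        this.newSystem = newSystem;
    }

    /** Sends the request to the new system if its path was migrated, else to the old one. */
    String handle(String path) {
        boolean migrated = migratedPrefixes.stream().anyMatch(path::startsWith);
        return (migrated ? newSystem : legacySystem).apply(path);
    }
}
```

Over time the set of migrated prefixes grows until nothing is routed to the old system any more, at which point it can be switched off.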

Providing observability with MicroProfile Metrics in Open Liberty

MicroProfile provides features that you really should look into when developing cloud-native apps, such as MicroProfile Metrics. When you have multiple, maybe hundreds or thousands, of apps running simultaneously, you have to monitor them. Metrics help you do that. The applications provide monitoring systems like Prometheus (with Grafana for the UI) with information that you can analyze and attach alerts to. You can probably get metrics like RAM or CPU usage, or the number of restarts, from the cloud platform (e.g. Kubernetes does this). Beyond that, you can create business-driven metrics like "how many orders are placed in my shop" or "how many logins happen in a given period". Be creative! This feature opens up new possibilities. In our case, we added metrics to learn which version of the standard was called with which metadata. That helped us migrate the clients and business services to the new version.
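
As a rough illustration of such a business-driven metric, the sketch below counts messages per standard version and renders them in the Prometheus text format. It is a plain-JDK stand-in, not the MicroProfile Metrics API (which offers annotations such as `@Counted` and serves the data on a `/metrics` endpoint); the metric name `dispatcher_messages_total` and the `version` label are hypothetical.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentSkipListMap;
import java.util.concurrent.atomic.LongAdder;

/** Illustrative per-version message counter; names are hypothetical. */
final class MessageMetricsSketch {
    private static final String METRIC = "dispatcher_messages_total";

    // Sorted map so that the rendered output has a stable order.
    private final Map<String, LongAdder> countsByVersion = new ConcurrentSkipListMap<>();

    /** Call once for every message received for the given standard version. */
    void recordMessage(String standardVersion) {
        countsByVersion.computeIfAbsent(standardVersion, v -> new LongAdder()).increment();
    }

    /** Renders the counters as Prometheus text (label values are not escaped here). */
    String toPrometheusText() {
        StringBuilder out = new StringBuilder("# TYPE " + METRIC + " counter\n");
        countsByVersion.forEach((version, count) -> out.append(METRIC)
                .append("{version=\"").append(version).append("\"} ")
                .append(count.sum()).append('\n'));
        return out.toString();
    }
}
```

With a counter like this, a dashboard can show how traffic shifts from the old version of the standard to the new one while clients migrate.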

Tracing microservices with MicroProfile OpenTracing and Open Liberty

Another important topic is tracing. When you create a microservice infrastructure, you end up managing services that communicate synchronously and asynchronously. Either way, it is important to be able to follow the path of communication, especially when diagnosing bugs. Imagine you have to find a bug in a calling chain of 10 apps, possibly implemented by different teams. This is very hard without further support. You can handle it by attaching a trace ID to each message and sending this information to services like Jaeger or Zipkin. MicroProfile supports you here with MicroProfile OpenTracing. Ideally, you trace the whole path. In our case, we trace not only the call from the standard service to the core service but the whole chain, from the client calling the standard service to the services called by the core service.
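
The core idea of passing a trace ID along can be sketched in a few lines of plain Java. This is only an illustration of the principle: the header name `X-Trace-Id` and the class are hypothetical, and real tracers such as Jaeger or Zipkin define their own header formats and also record timing data, which MicroProfile OpenTracing handles for you.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.UUID;

/** Illustrative trace ID propagation; the header name is hypothetical. */
final class TraceIdPropagationSketch {
    static final String TRACE_HEADER = "X-Trace-Id";

    /** Reuses the caller's trace ID, or starts a new trace if none was sent. */
    static String traceIdOf(Map<String, String> incomingHeaders) {
        String existing = incomingHeaders.get(TRACE_HEADER);
        return (existing == null || existing.isEmpty())
                ? UUID.randomUUID().toString()
                : existing;
    }

    /** Builds the headers for a downstream call so that the trace continues. */
    static Map<String, String> outgoingHeaders(Map<String, String> incomingHeaders) {
        Map<String, String> outgoing = new HashMap<>();
        outgoing.put(TRACE_HEADER, traceIdOf(incomingHeaders));
        return outgoing;
    }
}
```

Because every service forwards the same ID, log entries and spans from all apps in the chain can be correlated afterwards.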

Checking the health of microservices with MicroProfile Health Check and Open Liberty

Last, but not least, cloud platforms like Kubernetes offer self-healing. Kubernetes checks for "liveness" and, if your application is in an unstable state, restarts the app for you. Kubernetes also checks for "readiness" before adding your app to the load balancer so that it can receive traffic. However, by default Kubernetes considers a pod ready to receive work when the container is started - not when the server with the app is started and ready. This is very important: imagine scaling your app so that a new instance is created and started. If Kubernetes doesn’t check the readiness of the app itself, the first requests will fail because the server and the app haven’t started yet. You can help Kubernetes by giving it endpoints in your app that provide information about your app’s readiness.
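
The logic behind such a readiness endpoint can be sketched as follows. This is a plain-JDK illustration rather than the MicroProfile Health API, which lets you implement `HealthCheck` beans marked with `@Readiness` or `@Liveness` and serves them under `/health/ready` and `/health/live`. The class name and the check names in the usage note are hypothetical; the 200/503 status codes and the `UP`/`DOWN` status values mirror what MicroProfile Health reports.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.BooleanSupplier;

/** Illustrative readiness aggregation: ready only if every registered check passes. */
final class ReadinessSketch {
    private final Map<String, BooleanSupplier> checks = new LinkedHashMap<>();

    /** Registers a named check, e.g. "database reachable" (names are hypothetical). */
    ReadinessSketch register(String name, BooleanSupplier check) {
        checks.put(name, check);
        return this;
    }

    boolean isReady() {
        return checks.values().stream().allMatch(BooleanSupplier::getAsBoolean);
    }

    /** HTTP status a readiness probe would see: 200 when ready, 503 otherwise. */
    int httpStatus() {
        return isReady() ? 200 : 503;
    }

    /** Minimal JSON body with the overall status. */
    String jsonBody() {
        return "{\"status\":\"" + (isReady() ? "UP" : "DOWN") + "\"}";
    }
}
```

For example, an app could register a "database" check and a "cache warmed" check, and a Kubernetes readiness probe pointed at the endpoint would keep traffic away from the pod until both pass.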

Convert your Java EE apps to cloud-native apps on Open Liberty

Overall, Open Liberty is a good choice for converting your Java EE applications to the cloud-native paradigm step by step. You can add one feature at a time while you refactor your app to comply with the 12 factors and get rid of older technologies like remote EJBs.